Control Google Cloud Storage Costs: Intelligent Data Management for MSPs

Key takeaways for IT leaders

Every mid-market IT team and MSP I talk to is wrestling with the same operational problem: data is growing, budgets aren’t, and cloud bills—especially around Google Cloud storage and egress—are unpredictable. Teams try to bolt existing backup and storage habits onto GCP buckets, spin up copies for each project, and treat the cloud like another tape library. The result is sprawl, surprise invoices, and a higher risk profile when compliance or restores are required.

Traditional storage approaches fail here because they were built for a different era. On‑prem appliance thinking—snapshot everything, keep multiple full copies, refresh hardware on a fixed cycle—translates poorly to Google Cloud, where every GB stored, moved, or read has a cost and where cross-region copies multiply exposure. Native cloud tools solve some problems but leave lifecycle discipline, cost-aware placement, and multi-tenant control to manual processes or fragile scripts.
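To make the "every GB has a cost" point concrete, here is a minimal back-of-the-envelope sketch. The `Rates` fields and the `monthly_cost` helper are hypothetical names introduced for illustration, and the prices in the usage note are placeholders, not Google Cloud list prices. Substitute figures from your own price sheet or invoice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Per-GB prices in USD. Placeholder values only; not real list prices."""
    storage_per_gb_month: float
    egress_per_gb: float
    retrieval_per_gb: float


def monthly_cost(rates: Rates, stored_gb: float, egress_gb: float,
                 retrieved_gb: float, copies: int = 1) -> float:
    """Estimate one month's bill for a dataset kept as `copies` full copies."""
    if copies < 1:
        raise ValueError("copies must be at least 1")
    storage = stored_gb * copies * rates.storage_per_gb_month  # grows with every extra copy
    egress = egress_gb * rates.egress_per_gb                   # data leaving the cloud or region
    retrieval = retrieved_gb * rates.retrieval_per_gb          # reads from colder storage classes
    return storage + egress + retrieval
```

With placeholder rates such as `Rates(0.02, 0.10, 0.01)`, comparing `monthly_cost(rates, 1000, 100, 50, copies=1)` against the same call with `copies=3` shows how per-project copies scale the storage line while egress and retrieval stay fixed.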

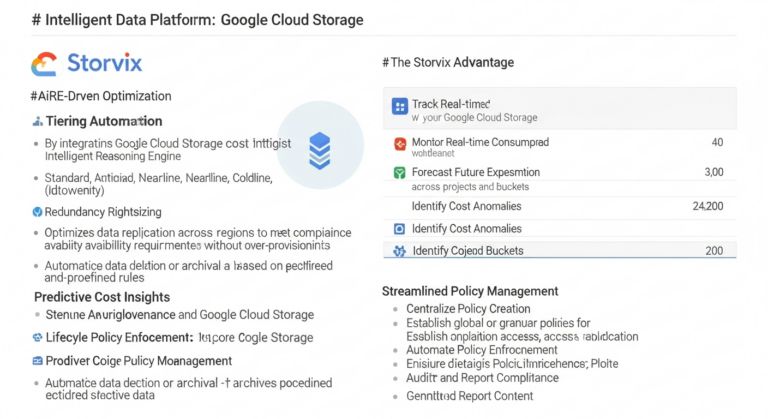

The practical strategic shift is toward an intelligent data platform that understands lifecycle, cost, and compliance constraints and enforces them consistently. Platforms such as STORViX give you policy-driven tiering and placement, automated retention and disposal, and controls for egress and immutable retention—so you can run predictable operations on Google Cloud, reduce unnecessary copies, and keep auditability and recovery SLAs without ballooning costs. That’s not hype; it’s about turning an uncontrolled variable (data growth) into a manageable line item.
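As an illustration of what policy-driven tiering and automated disposal look like at the bucket level, the sketch below builds a lifecycle configuration in the JSON shape that Google Cloud Storage's own Object Lifecycle Management accepts. It is not STORViX's policy format, which this article does not describe. The function name and the day thresholds are assumptions chosen for the example.

```python
import json


def build_lifecycle_policy(nearline_after_days: int,
                           coldline_after_days: int,
                           delete_after_days: int) -> dict:
    """Return a GCS lifecycle configuration: tier down with age, then delete.

    The thresholds are illustrative; choose them from your own retention
    and recovery requirements.
    """
    if not (0 < nearline_after_days < coldline_after_days < delete_after_days):
        raise ValueError("thresholds must be positive and strictly increasing")
    return {
        "rule": [
            {   # Move objects out of Standard once they are rarely read.
                "action": {"type": "SetStorageClass", "storageClass": "NEARLINE"},
                "condition": {"age": nearline_after_days,
                              "matchesStorageClass": ["STANDARD"]},
            },
            {   # Move older Nearline objects to a colder, cheaper class.
                "action": {"type": "SetStorageClass", "storageClass": "COLDLINE"},
                "condition": {"age": coldline_after_days,
                              "matchesStorageClass": ["NEARLINE"]},
            },
            {   # Dispose of objects once the retention window has passed.
                "action": {"type": "Delete"},
                "condition": {"age": delete_after_days},
            },
        ]
    }


def to_json(policy: dict) -> str:
    """Serialize the policy so it can be saved to a file and applied to a bucket."""
    return json.dumps(policy, indent=2)
```

For example, `to_json(build_lifecycle_policy(30, 90, 365))` produces a file you could review and apply per bucket. Colder classes carry minimum storage durations and retrieval charges, so thresholds that are too aggressive can raise costs rather than lower them.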
